Setting up a dynamic raft-based Quorum network for development and testing using Helm.

Motivation

My colleague Majd's article covers the basics of Quorum and shows how to set up a minimal Quorum network using Docker containers. Building on it, we decided to take things a bit further and provide a dynamic Quorum setup that can be deployed to Kubernetes. Our GitHub repository contains a Helm chart and some scripts that aim to make developing and testing on Quorum faster and easier. This article gives some insight into the setup and the functionality of the project.

Tooling & Prerequisites

For our setup, we need a running Kubernetes cluster and Helm. Helm enables us to deploy a preconfigured network to a running cluster using the concept of charts. This, in combination with some scripts, gives us the ability to dynamically add and remove nodes to and from the network.

Minikube (Kubernetes)

Minikube is one of several tools you can use to spin up a Kubernetes cluster locally. Other viable options are k3s and kind. These tools are widely used to develop and test applications on local infrastructure before deploying them to the target infrastructure.

Helm

Here is a pretty good explanation of what Helm does and how it is connected with Kubernetes:

In simple terms, Helm is a package manager for Kubernetes. Helm is the K8s equivalent of yum or apt. Helm deploys charts, which you can think of as a packaged application. It is a collection of all your versioned, pre-configured application resources which can be deployed as one unit. You can then deploy another version of the chart with a different set of configuration.

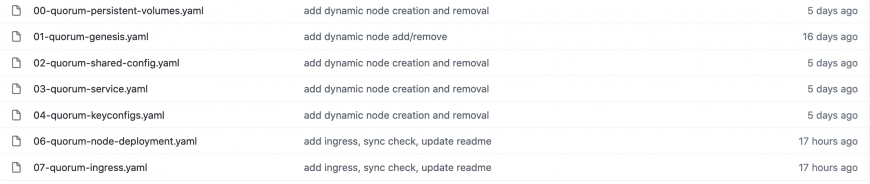

Dynamic Nodes

Now that we know the technical requirements, we can take a closer look at the actual repository. The intention for this setup was to use Quorum on Kubernetes. As we did not find any solution for this besides Qubernetes, the officially supported way to deploy Quorum to Kubernetes, we decided to create our own deployments using Helm. This approach is more flexible than the officially supported one, where the Kubernetes deployments are generated once and have to be regenerated and redeployed every time you need a different setup. With our dynamic approach, you can keep the network running while adding or removing nodes.

Quorum Configuration

The following code shows the values.yaml file in which most of the action will take place. Those values are used to dynamically fill a set of templates which you can then reuse for the number of nodes you want in your network. The setup also allows you to modify some additional configurations regarding the deployment such as input parameters for Quorum and Geth.

    quorum:
      version: 20.10.0
      storageSize: 1Gi
    geth:
      networkId: 10
      port: 30303
      raftPort: 50401
      verbosity: 3
      gethParams: --permissioned \
        --nodiscover \
        --nat=none \
        --unlock 0 \
        --emitcheckpoints \
        --rpccorsdomain '*' \
        --rpcvhosts '*' \

The values file additionally takes input for the nodes that are going to be deployed to the cluster. Some of those values are needed for adding a node to a raft-based Quorum cluster, while others provide additional functionality such as enabling or disabling endpoints.

    nodes:
      node1:
        endpoints:
          rpc: true
          ws: true
        ingress:
          rpc: true
          ws: true
        nodekey: 1be3b50b31734be48452c29d714941ba165ef0cbf3ccea8ca16c45e3d8d45fb0
        enode: ac6b1096ca56b9f6d004b779ae3728bf83f8e22453404cc3cef16a3d9b96608bc67c4b30db88e0a5a6c6390213f7acbe1153ff6d23ce57380104288ae19373ef
        key: |-
          {"address":"ed9d02e382b34818e88b88a309c7fe71e65f419d","crypto":{"cipher":"aes-128-ctr","ciphertext":"4e77046ba3f699e744acb4a89c36a3ea1158a1bd90a076d36675f4c883864377","cipherparams":{"iv":"a8932af2a3c0225ee8e872bc0e462c11"},"kdf":"scrypt","kdfparams":{"dklen":32,"n":262144,"p":1,"r":8,"salt":"8ca49552b3e92f79c51f2cd3d38dfc723412c212e702bd337a3724e8937aff0f"},"mac":"6d1354fef5aa0418389b1a5d1f5ee0050d7273292a1171c51fd02f9ecff55264"},"id":"a65d1ac3-db7e-445d-a1cc-b6c5eeaa05e0","version":3}

Under endpoints, the RPC and WebSocket endpoints can be switched on and off individually; they are used for communication inside the cluster. If needed, you can additionally enable Ingress to access the nodes from outside the cluster. With Ingress enabled, the nodes are reachable at http://<cluster-ip>/quorum-node<n>-rpc and http://<cluster-ip>/quorum-node<n>-ws. The nodekey and enode values are the key material of a raft node; they can be generated with the bootnode command provided by Geth. The key value is a Geth keystore file that holds the credentials of a Geth account. A node only functions properly when both the bootnode credentials and the Geth account are in place. Note that the example credentials provided in the repository should never be reused in a production environment.
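To make the key material more tangible, here is a minimal sketch of how a nodekey/enode pair could be generated and sanity-checked. It assumes the Geth bootnode binary is on the PATH; the function names and the output directory layout are made up for this example and are not taken from the repository.

```shell
#!/bin/sh
# Sketch only: generate and sanity-check the key material for one node.
# Assumes the Geth "bootnode" binary is installed; the helper names and the
# directory layout are hypothetical.

# Succeeds if $1 consists of exactly $2 hexadecimal characters.
is_hex_of_length() {
  printf '%s' "$1" | grep -Eq "^[0-9a-fA-F]{$2}\$"
}

# Writes <dir>/nodekey and <dir>/enode for a new node.
generate_node_keys() {
  dir="$1"
  mkdir -p "$dir" || return 1
  # -genkey writes a fresh private key (64 hex characters) to the given file
  bootnode -genkey "$dir/nodekey" || return 1
  # -writeaddress prints the matching public key (128 hex characters)
  bootnode -nodekey "$dir/nodekey" -writeaddress > "$dir/enode" || return 1
  is_hex_of_length "$(cat "$dir/nodekey")" 64 &&
    is_hex_of_length "$(cat "$dir/enode")" 128
}
```

Calling generate_node_keys ./node4 would leave both values in ./node4, ready to be copied into the values file. The keystore file for the key field is a separate step and can be created with geth account new.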

Reusing Templates

The above values will then be used to fill in the provided templates. Those templates usually take values for exactly one node/deployment.

By looping through the nodes object of the values file at the top of each template, we can reuse these for every new node added to the values file. Here’s an example for a PersistentVolumeClaim:

    {{ $scope := . }}
    {{- range $k, $v := .Values.nodes -}}
    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: quorum-node{{ $k | substr 4 6}}-pvc
      annotations:
    spec:
      accessModes:
        - ReadWriteOnce
      resources:
        requests:
          storage: {{ $scope.Values.quorum.storageSize }}
    ---
    {{ end }}
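The expression substr 4 6 cuts the node number out of the key, so node1 becomes quorum-node1-pvc. The following sketch mimics that naming in plain shell to show which names the loop produces; node_suffix and pvc_name are illustrative helpers, not part of the chart.

```shell
#!/bin/sh
# Mirrors `{{ $k | substr 4 6 }}`: keep at most characters 5 and 6 of the key.
node_suffix() {
  printf '%s' "$1" | cut -c5-6
}

# Builds the PersistentVolumeClaim name the template would render for a key.
pvc_name() {
  printf 'quorum-node%s-pvc\n' "$(node_suffix "$1")"
}

pvc_name node1    # quorum-node1-pvc
pvc_name node12   # quorum-node12-pvc
pvc_name node100  # quorum-node10-pvc (same name as for node10)
```

As the last call suggests, the two-character window means that keys are only distinguishable up to node99 with this naming scheme.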

Deploying and Updating the Chart

Now, let’s deploy the network to a running Kubernetes cluster. The command is run from the root of the repository (quorum-raft-helm-template), with quorum being the path to the chart:

    $ helm install nnodes quorum -n quorum-network
    NAME: nnodes
    LAST DEPLOYED: Fri Jan 29 10:40:57 2021
    NAMESPACE: quorum-network
    STATUS: deployed
    REVISION: 1
    TEST SUITE: None

After changing the configuration of your network, you can roll out the upgrade with:

    $ helm upgrade nnodes quorum -n quorum-network
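After an install or upgrade it can be handy to wait until all node Deployments are actually ready. A possible sketch, assuming the Deployments follow the quorum-node<n>-deployment pattern visible in the pod names of this article; the helper names and the timeout are arbitrary choices for this example.

```shell
#!/bin/sh
# Sketch: block until the Deployments of nodes 1..N have finished rolling out.
# The Deployment name pattern is inferred from the pod names in this article.
NAMESPACE="${NAMESPACE:-quorum-network}"

deployment_name() {
  printf 'quorum-node%s-deployment\n' "$1"
}

wait_for_nodes() {
  count="$1"
  i=1
  while [ "$i" -le "$count" ]; do
    # kubectl rollout status returns non-zero if the rollout does not finish
    kubectl rollout status "deployment/$(deployment_name "$i")" \
      -n "$NAMESPACE" --timeout=120s || return 1
    i=$((i + 1))
  done
}
```

For the initial network, wait_for_nodes 3 would return once all three nodes report a completed rollout.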

Adding New Nodes

Besides adding and removing nodes by modifying the values file manually, we also implemented a more convenient way to do this: the repository provides several scripts that add or remove individual nodes as well as multiple nodes at once.

In the following, I will use the addNodes.sh script to dynamically add new nodes to a running cluster.

    $ kubectl get pods -n quorum-network
    NAME                                       READY   STATUS    RESTARTS   AGE
    quorum-node1-deployment-565ccffd99-tnf4h   1/1     Running   0          6m31s
    quorum-node2-deployment-dc879f67d-b7549    1/1     Running   0          6m31s
    quorum-node3-deployment-7cd769cdfc-bzxrm   1/1     Running   0          6m31s

    quorum:
      version: 20.10.0
      storageSize: 1Gi
    geth:
      networkId: 10
      port: 30303
      raftPort: 50401
      verbosity: 3
      gethParams: --permissioned \
        --nodiscover \
        --nat=none \
        --unlock 0 \
        --emitcheckpoints \
        --rpccorsdomain '*' \
        --rpcvhosts '*' \
    nodes:
      node1:
        endpoints:
          rpc: true
          ws: true
        ingress:
          rpc: true
          ws: true
        nodekey: 1be3b50b31734be48452c29d714941ba165ef0cbf3ccea8ca16c45e3d8d45fb0
        enode: ac6b1096ca56b9f6d004b779ae3728bf83f8e22453404cc3cef16a3d9b96608bc67c4b30db88e0a5a6c6390213f7acbe1153ff6d23ce57380104288ae19373ef
        key: |-
          {"address":"ed9d02e382b34818e88b88a309c7fe71e65f419d","crypto":{"cipher":"aes-128-ctr","ciphertext":"4e77046ba3f699e744acb4a89c36a3ea1158a1bd90a076d36675f4c883864377","cipherparams":{"iv":"a8932af2a3c0225ee8e872bc0e462c11"},"kdf":"scrypt","kdfparams":{"dklen":32,"n":262144,"p":1,"r":8,"salt":"8ca49552b3e92f79c51f2cd3d38dfc723412c212e702bd337a3724e8937aff0f"},"mac":"6d1354fef5aa0418389b1a5d1f5ee0050d7273292a1171c51fd02f9ecff55264"},"id":"a65d1ac3-db7e-445d-a1cc-b6c5eeaa05e0","version":3}
      node2:
        endpoints:
          rpc: true
          ws: true
        ingress:
          rpc: true
          ws: true
        nodekey: 9bdd6a2e7cc1ca4a4019029df3834d2633ea6e14034d6dcc3b944396fe13a08b
        enode: 0ba6b9f606a43a95edc6247cdb1c1e105145817be7bcafd6b2c0ba15d58145f0dc1a194f70ba73cd6f4cdd6864edc7687f311254c7555cc32e4d45aeb1b80416
        key: |-
          {"address":"ca843569e3427144cead5e4d5999a3d0ccf92b8e","crypto":{"cipher":"aes-128-ctr","ciphertext":"01d409941ce57b83a18597058033657182ffb10ae15d7d0906b8a8c04c8d1e3a","cipherparams":{"iv":"0bfb6eadbe0ab7ffaac7e1be285fb4e5"},"kdf":"scrypt","kdfparams":{"dklen":32,"n":262144,"p":1,"r":8,"salt":"7b90f455a95942c7c682e0ef080afc2b494ef71e749ba5b384700ecbe6f4a1bf"},"mac":"4cc851f9349972f851d03d75a96383a37557f7c0055763c673e922de55e9e307"},"id":"354e3b35-1fed-407d-a358-889a29111211","version":3}
      node3:
        endpoints:
          rpc: true
          ws: true
        ingress:
          rpc: true
          ws: true
        nodekey: 722f11686b2277dcbd72713d8a3c81c666b585c337d47f503c3c1f3c17cf001d
        enode: 579f786d4e2830bbcc02815a27e8a9bacccc9605df4dc6f20bcc1a6eb391e7225fff7cb83e5b4ecd1f3a94d8b733803f2f66b7e871961e7b029e22c155c3a778
        key: |-
          {"address":"0fbdc686b912d7722dc86510934589e0aaf3b55a","crypto":{"cipher":"aes-128-ctr","ciphertext":"6b2c72c6793f3da8185e36536e02f574805e41c18f551f24b58346ef4ecf3640","cipherparams":{"iv":"582f27a739f39580410faa108d5cc59f"},"kdf":"scrypt","kdfparams":{"dklen":32,"n":262144,"p":1,"r":8,"salt":"1a79b0db3f8cb5c2ae4fa6ccb2b5917ce446bd5e42c8d61faeee512b97b4ad4a"},"mac":"cecb44d2797d6946805d5d744ff803805477195fab1d2209eddc3d1158f2e403"},"id":"f7292e90-af71-49af-a5b3-40e8493f4681","version":3}

The addNodes.sh script now allows me to decide how many nodes I want to add to the cluster. I choose to add 2 additional nodes and the script automatically generates the corresponding credentials, adds them to the values file, and upgrades the deployed Helm chart.

      node4:
        raftId: 4
        endpoints:
          rpc: true
          ws: true
        ingress:
          rpc: true
          ws: true
        nodekey: 887e72fb0a5eb31bb250c0bf84b680abd6187af3fffbde19d91947e4c5f3a50f
        enode: bbf5516a1ef70986bf95d6c1d94d06b2fe59c68ecf66204a3bdc407396f54840fdffc52180f7a1dda1c62053bf6509719f7797741c967fcad61662963b8e0db3
        key: |-
          {"address":"c19dbbb10b64e7c57630d8c6bfd981c139af0cdd","crypto":{"cipher":"aes-128-ctr","ciphertext":"8fb8150042f358fb586f130b9bed9dd11738f9006ab04adff88301b1ef8d1cc2","cipherparams":{"iv":"3eb6492f695b7795442feb66e921f67c"},"kdf":"scrypt","kdfparams":{"dklen":32,"n":262144,"p":1,"r":8,"salt":"45504007e2b03d694084cc3095d3b55ee54160cda4e550eec8cb4014cd2119be"},"mac":"9e2d70245ad21a184037f2481972d9ea7a30a68f4fd5ef2725b6f252d1cf1456"},"id":"a346aaff-b3ee-41d3-a6d6-fc485018ea0c","version":3}
      node5:
        raftId: 5
        endpoints:
          rpc: true
          ws: true
        ingress:
          rpc: true
          ws: true
        nodekey: 6e5d10569ed22dc1ddaf71ecde96f5b99776da766e282c9d05a619df383e1a2e
        enode: 9e03bc0f583c9b8e5f998ba9e69927a9b35f04bc574341b536721402ecfb0928fd0bc5ed18d8755c6ba097ba40e1463c56a8e1ec287443f64ceca2090b6cc424
        key: |-
          {"address":"14bcb4b40319d8107e3ab521ac9f6c528faa92a8","crypto":{"cipher":"aes-128-ctr","ciphertext":"e8afeafe3a76678e870ad5c15be5830e6e150d8eb7d13d1137d88254eb03a9ef","cipherparams":{"iv":"7ea5b75d26dfde41810330607be71130"},"kdf":"scrypt","kdfparams":{"dklen":32,"n":262144,"p":1,"r":8,"salt":"4bf4425b9935ed7b97e8a51bd9b5ab795c6c185e39e720bddf597a85b6f8b270"},"mac":"09e3b623dab7624baa992e5ce268501087c25e9ec070332366820e02172c87f9"},"id":"6fff1e93-67b7-4490-804f-8d90c805c785","version":3}
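An entry like the two above could be rendered by a small helper along the following lines. This is a hypothetical sketch and not the code of addNodes.sh; the helper name is invented, and the YAML nesting (ingress next to endpoints, entries indented below nodes) is an assumption about the layout of the values file.

```shell
#!/bin/sh
# Hypothetical helper, not the code of addNodes.sh: print the values.yaml
# entry for node <index> from already generated key material.
# Arguments: index, nodekey, enode, keystore JSON (single line).
render_node_entry() {
  index="$1" nodekey="$2" enode="$3" keystore_json="$4"
  cat <<EOF
  node${index}:
    raftId: ${index}
    endpoints:
      rpc: true
      ws: true
    ingress:
      rpc: true
      ws: true
    nodekey: ${nodekey}
    enode: ${enode}
    key: |-
      ${keystore_json}
EOF
}
```

The output is indented so that it can be appended below the existing nodes block, for example with render_node_entry 4 "$nodekey" "$enode" "$keystore" >> quorum/values.yaml, where the path to the values file is again an assumption.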

Note the additionally generated value raftId. It is required for every additional node because it enables the flag --raftjoinexisting <raftId>, which is needed to properly add a new node to the initial three-node cluster.
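In Quorum's raft mode, an existing member has to admit a new peer via raft.addPeer before that peer starts with --raftjoinexisting; the call returns the raftId to use. The sketch below only builds the enode URL and the console expression for such a call. The host name pattern and ports are taken from the values and cluster output in this article, whereas the helper names and the way the chart wires this up are assumptions.

```shell
#!/bin/sh
# Sketch: the enode URL an existing member needs in order to admit node <index>.
# Ports default to the values used in this article; helper names are made up.
P2P_PORT="${P2P_PORT:-30303}"
RAFT_PORT="${RAFT_PORT:-50401}"

# Arguments: node index, enode (public key, 128 hex characters).
enode_url() {
  index="$1" enode="$2"
  printf 'enode://%s@quorum-node%s:%s?discport=0&raftport=%s\n' \
    "$enode" "$index" "$P2P_PORT" "$RAFT_PORT"
}

# JavaScript expression for the Geth console; raft.addPeer returns the raftId.
add_peer_expr() {
  printf 'raft.addPeer("%s")\n' "$(enode_url "$1" "$2")"
}
```

The resulting expression can be passed to geth --exec on a node that is already part of the cluster.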

    $ kubectl get pods -n quorum-network
    NAME                                       READY   STATUS    RESTARTS   AGE
    quorum-node1-deployment-565ccffd99-tnf4h   1/1     Running   0          25m
    quorum-node2-deployment-dc879f67d-b7549    1/1     Running   0          25m
    quorum-node3-deployment-7cd769cdfc-bzxrm   1/1     Running   0          25m
    quorum-node4-deployment-757cd46ffd-vn94p   1/1     Running   0          89s
    quorum-node5-deployment-55b448b96d-w7rmp   1/1     Running   0          52s

After updating the chart, you can see that the new nodes have been added to the cluster. To confirm that the cluster is running and properly synchronized, run the following command. It attaches to the Geth instance running inside the container and evaluates raft.cluster to show the state of raft (see below); other Geth console commands can be executed the same way. If the resulting nodeActive value is true, the node is properly synced with the cluster and ready to go.

    $ kubectl exec -n quorum-network quorum-node1-deployment-565ccffd99-tnf4h -- geth --exec "raft.cluster" attach ipc:etc/quorum/qdata/dd/geth.ipc
    [{
        hostname: "quorum-node2",
        nodeActive: true,
        nodeId: "0ba6b9f606a43a95edc6247cdb1c1e105145817be7bcafd6b2c0ba15d58145f0dc1a194f70ba73cd6f4cdd6864edc7687f311254c7555cc32e4d45aeb1b80416",
        p2pPort: 30303,
        raftId: 2,
        raftPort: 50401,
        role: "minter"
    }, {
        hostname: "quorum-node3",
        nodeActive: true,
        nodeId: "579f786d4e2830bbcc02815a27e8a9bacccc9605df4dc6f20bcc1a6eb391e7225fff7cb83e5b4ecd1f3a94d8b733803f2f66b7e871961e7b029e22c155c3a778",
        p2pPort: 30303,
        raftId: 3,
        raftPort: 50401,
        role: "verifier"
    }, {
        hostname: "quorum-node4",
        nodeActive: true,
        nodeId: "bbf5516a1ef70986bf95d6c1d94d06b2fe59c68ecf66204a3bdc407396f54840fdffc52180f7a1dda1c62053bf6509719f7797741c967fcad61662963b8e0db3",
        p2pPort: 30303,
        raftId: 4,
        raftPort: 50401,
        role: "verifier"
    }, {
        hostname: "quorum-node5",
        nodeActive: true,
        nodeId: "9e03bc0f583c9b8e5f998ba9e69927a9b35f04bc574341b536721402ecfb0928fd0bc5ed18d8755c6ba097ba40e1463c56a8e1ec287443f64ceca2090b6cc424",
        p2pPort: 30303,
        raftId: 5,
        raftPort: 50401,
        role: "verifier"
    }, {
        hostname: "quorum-node1",
        nodeActive: true,
        nodeId: "ac6b1096ca56b9f6d004b779ae3728bf83f8e22453404cc3cef16a3d9b96608bc67c4b30db88e0a5a6c6390213f7acbe1153ff6d23ce57380104288ae19373ef",
        p2pPort: 30303,
        raftId: 1,
        raftPort: 50401,
        role: "verifier"
    }]
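Checking every nodeActive value by eye gets tedious as the network grows. A minimal sketch of an automated check, assuming the console output keeps the hostname and nodeActive field names shown above; all_peers_active is a name invented for this example.

```shell
#!/bin/sh
# Sketch: decide from the raft.cluster text whether every listed peer is active.
# Reads the console output on stdin and compares the number of peers
# (hostname fields) with the number of "nodeActive: true" entries.
all_peers_active() {
  output="$(cat)"
  total="$(printf '%s\n' "$output" | grep -c 'hostname:' || true)"
  active="$(printf '%s\n' "$output" | grep -c 'nodeActive: true' || true)"
  [ "$total" -gt 0 ] && [ "$total" -eq "$active" ]
}
```

Piping the output of the kubectl exec command into all_peers_active yields exit status 0 only if at least one peer is listed and all of them are active, which makes it usable in scripts or CI jobs.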

Conclusion

All in all, this project should help people test and develop Quorum networks running on Kubernetes infrastructure. Besides that, it is also a simple and convenient way to gather some experience with Quorum and to experiment with various settings, especially if you have trouble figuring out the right configuration for your use case. If you want to take a look at the repository, feel free to do so. If you have any suggestions or improvements, you are welcome to create a PR, which we will happily review.

51nodes GmbH based in Stuttgart is a provider of crypto-economy solutions.

51nodes supports companies and other organizations in realizing their Blockchain projects. 51nodes offers technical consulting and implementation with a focus on smart contracts, decentralized apps (DApps), integration of Blockchain with industry applications, and tokenization of assets.